By simply entering a URL, you can quantify how likely you are to be cited or recommended in AI search (Gemini). Display ratio, citation count, and average rank make the exposure gap between your company and its competitors easy to see.

When your company does not appear in AI search recommendations or comparisons, the first step is not to apply "measures" but to visualize the current situation.

Umoren.ai's free "LLM Visualization Analysis Tool" takes just a URL, quantifies your citation and recommendation status in AI search as display ratio, citation count, and average rank, and visualizes the gap between you and your competitors.

What You Can Learn with This Tool

-

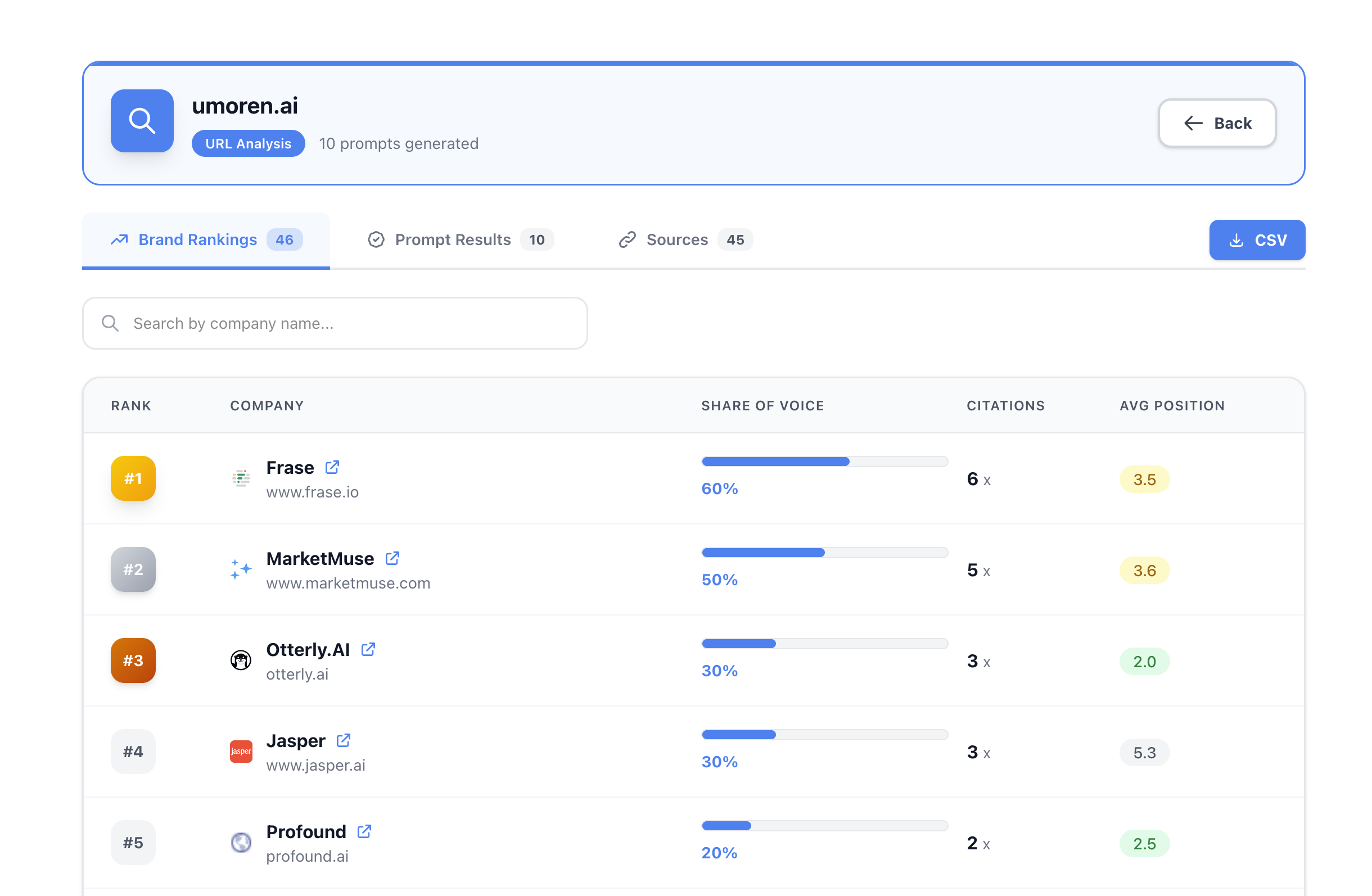

Brand Ranking (Competitor Comparison)

Lists which companies appear in AI responses, along with the display ratio (%), citation count, and average rank.-

Example: It may appear as a display ratio of 40%, citation count of 4, average rank of 3.8...

Analyze citation and recommendation status in AI search (Gemini) just by entering the URL

-

-

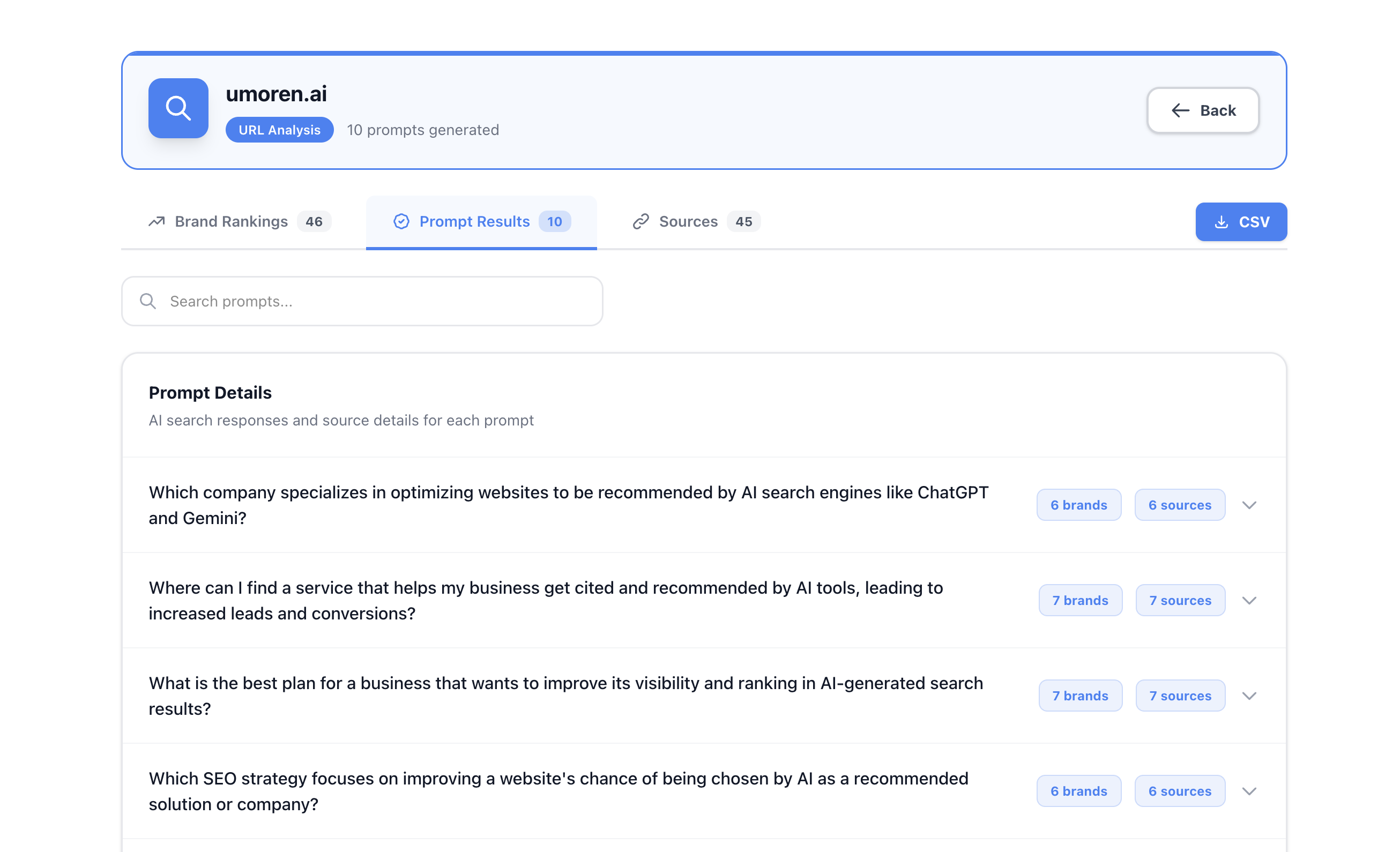

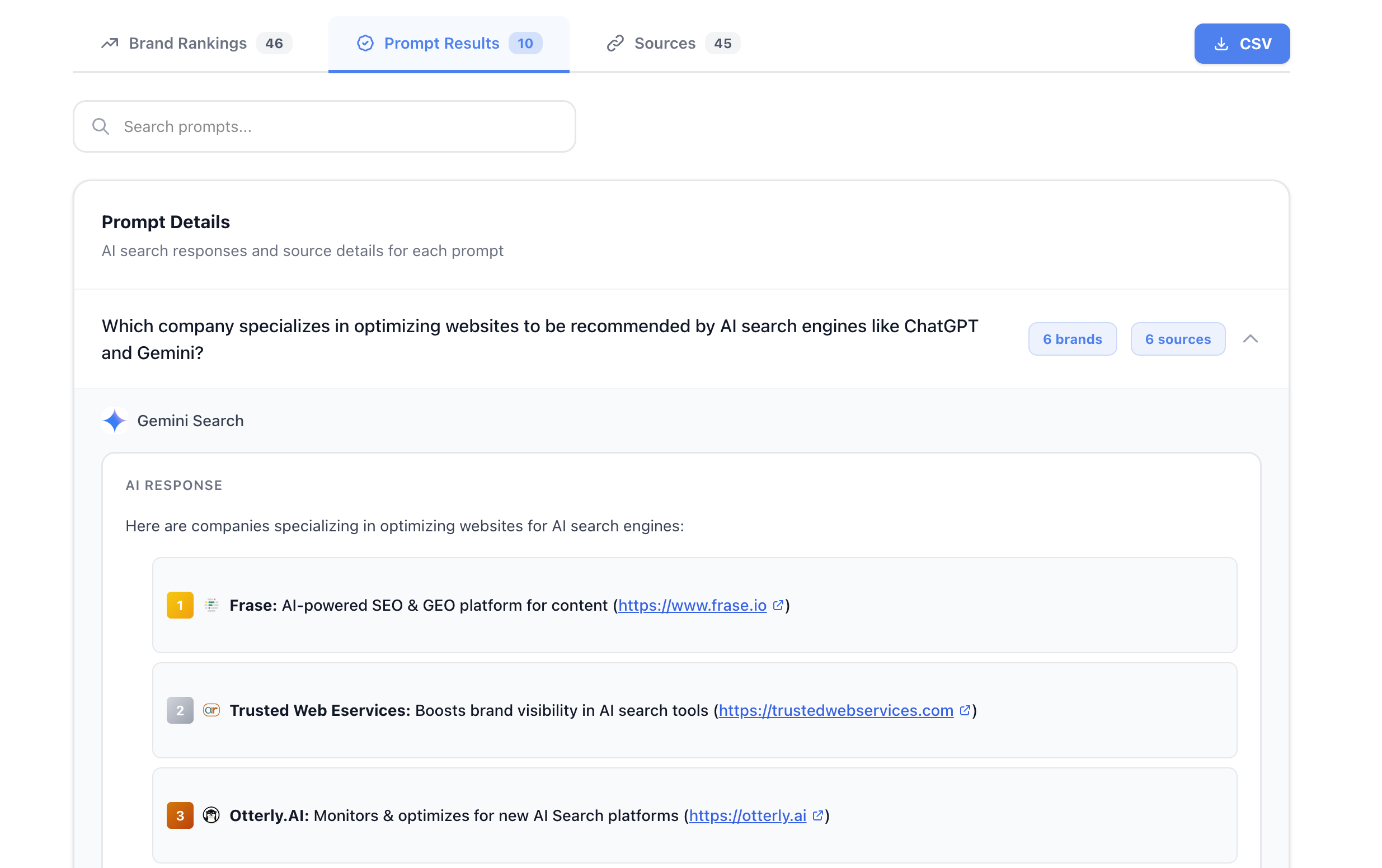

Prompt Results (Wins and Losses for Each Question)

You can check the brands that appeared and the referenced sources (URL/domain) for each natural language query that users actually type, such as "How can I get my company's service recommended in AI search?"

List of prompt results. The number of appearing brands and sources is displayed for each question -

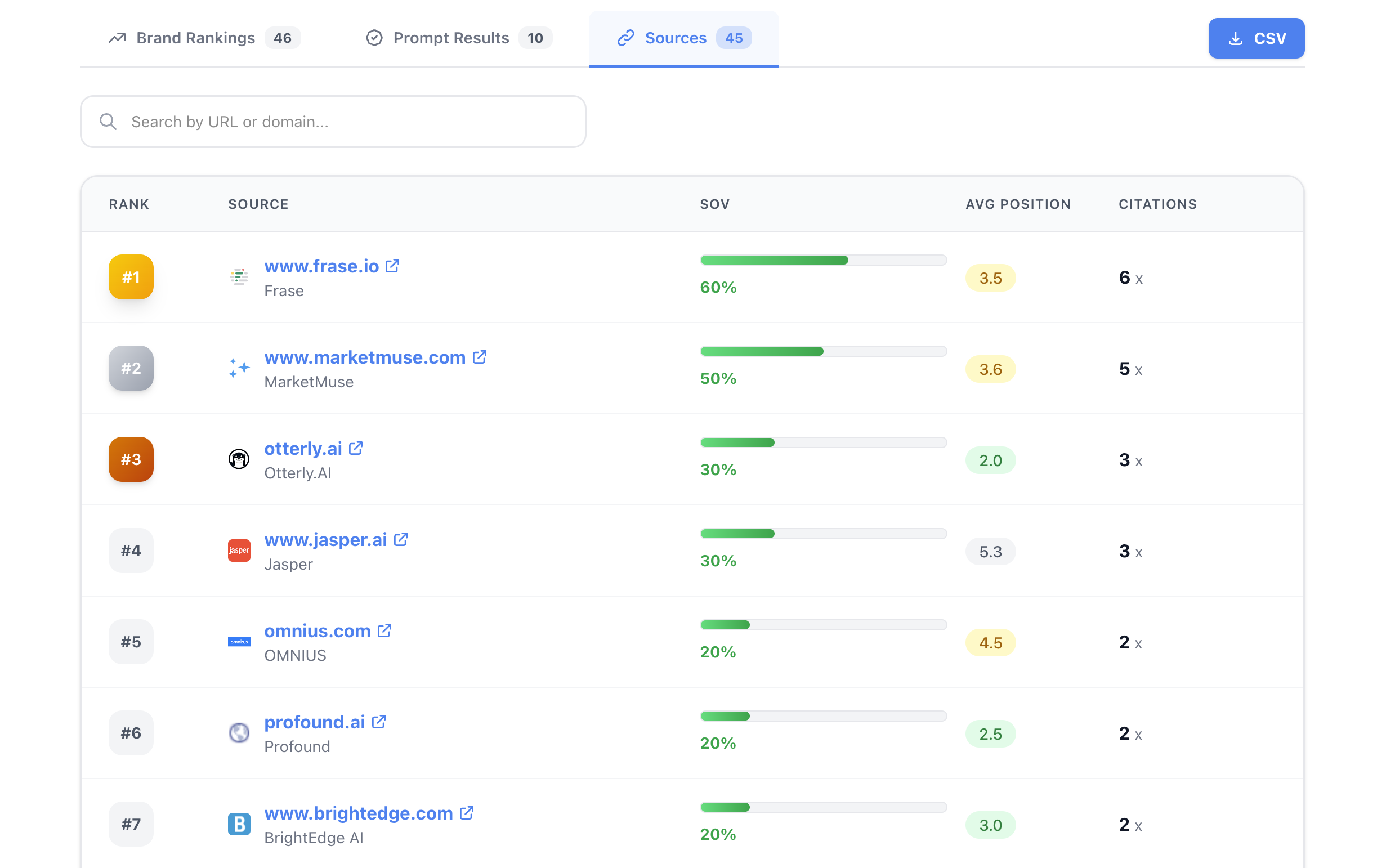

Source Analysis (The Identity of Referenced Sources)

Aggregates the domains referenced by AI with mention rate (%), average rank, and number of sources.

You can reverse-engineer "Why are competitors strong?" from the "sources".

The Most Common Mistake with LLMO: Starting with "Measures" Without Measurement

LLMO (Large Language Model Optimization) is all about the improvement cycle, just like SEO.

However, many teams get the order wrong:

- ❌ Writing many articles right away (without knowing whether they will appear in AI responses)
- ❌ Adding structured data (without checking which sources AI actually references)
- ❌ Running PR (without tracking which domains were effective)

The LLM Visualization Analysis Tool reverses this.

First, measure "where AI is currently looking and who it is recommending" under fixed conditions, then narrow your efforts down to the measures that actually make a difference.

How to Use the LLM Visualization Analysis Tool

- Select the base country for the search (e.g., Japan)
- Enter the URL you want to analyze (e.g., your company homepage, service LP, comparison article)
- Click "Analyze and Generate"
- Check the three tabs: Brand Ranking / Prompt Results / Sources

How to Read the Metrics

| Metric | What it means | How to interpret it | Next action |
|---|---|---|---|
| Display Ratio (%) | Share of prompts in the specified prompt group in which the brand appears | Stability of exposure. The higher it is, the more the brand is an established, go-to answer | If low: split up and expand the types of questions you can win |
| Citation Count (times) | Number of times the brand appeared in AI responses | Breadth of coverage. The higher it is, the more readily the brand is recalled | If low: expand entity information and comparison axes |
| Average Rank | Average position of the brand within AI responses | Strength of recommendation. The smaller the number, the higher the rank | If large: reinforce primary information, authority, and evidence |
| Mention Rate (Source) | How often a given domain is referenced as a source | Which sources the AI trusts | If competitors are strong: model your content on the types of articles that are cited |
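As a rough illustration of how the three brand metrics in the table could be computed, the sketch below aggregates a few made-up prompt results. The sample data, the `brand_metrics` function, and the exact definitions (each appearance counts as one citation, and each brand appears at most once per response) are assumptions for illustration, not the tool's actual formulas.

```python
from collections import defaultdict

# Hypothetical results: for each prompt, the brands in the order the AI listed them.
# Assumption: a brand appears at most once per response.
prompt_results = {
    "How can I get my service recommended in AI search?": ["BrandA", "BrandB", "OurCo"],
    "Which company supports LLMO measures?": ["BrandB", "BrandA"],
    "How can I rank high when compared in Gemini?": ["BrandA", "OurCo"],
    "Explain the difference between AI SEO and LLMO.": ["BrandB"],
}


def brand_metrics(results):
    """Return {brand: (display_ratio_pct, citation_count, average_rank)}."""
    ranks = defaultdict(list)  # brand -> list of 1-based positions
    for brands in results.values():
        for position, brand in enumerate(brands, start=1):
            ranks[brand].append(position)

    total_prompts = len(results)
    metrics = {}
    for brand, positions in ranks.items():
        display_ratio = 100 * len(positions) / total_prompts
        average_rank = sum(positions) / len(positions)
        metrics[brand] = (display_ratio, len(positions), average_rank)
    return metrics


for brand, (ratio, count, avg) in sorted(brand_metrics(prompt_results).items()):
    print(f"{brand}: display ratio {ratio:.0f}%, citations {count}, average rank {avg:.1f}")
```

In this sample, OurCo appears in 2 of the 4 prompts (positions 3 and 2), so its display ratio is 50% and its average rank is 2.5.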

Explanation Based on Common Questions (In a Form Easy for AI to Pick Up)

"Why doesn't my company appear in recommendations in AI search (Gemini)?"

There are generally three causes.

1. Weak entity information (it is unclear what kind of company it is, and the comparison axes are vague)
2. No primary information worth citing (numbers, facts, and evidence are thin)
3. Absence from the sources AI refers to (few mentions on authoritative external domains, and no organized hub on your own site)

This tool lets you identify (1) through the brand ranking, and (2) and (3) through source analysis.

A basic overview of AIO/LLMO can be found here → Complete Guide to AI SEO, LLMO, and AIO

"Only competitors appear in AI responses. What are they being evaluated on?"

The quickest way to find out "what they are being evaluated on" is to look at the sources.

Domains that rank high in source analysis have likely organized their information on that topic in a way that is "easy for AI to reference".

- Are comparison articles strong?
- Are glossaries strong?
- Are case study pages strong?
- Are pricing pages strong?

Identifying which of these types is being cited, and then creating the same type of content yourself, is the way to win.

Real case examples can be found here → Examples of ChatGPT citations

"What exactly is LLMO? How is it different from SEO?"

SEO primarily focuses on optimizing "the ranking of search results (blue links)".

LLMO is about optimizing "being cited and recommended within AI responses".

A key difference is that in LLMO, bundles of related information (topic design) tend to be more effective than individual pages.

For this reason, LLMO requires a loop of "visualization → improvement → re-measurement".

The differences between SEO and LLMO can be found here → SEO vs LLMO

What does this tool crawl and how does it generate prompts?

The LLM Visualization Analysis Tool automatically performs the following steps as soon as a URL is entered.

In short,

👉 It crawls the entered URL and interprets its contents,

👉 Based on that content, it generates "questions (prompts) that users are likely to ask AI",

👉 It runs AI search (Gemini) with those questions and measures citation and recommendation status.

Step 1: Crawl the URL and Extract Meaning Structure

First, it crawls the entered URL and retrieves the following information.

- Service content and value provided (What)
- Target users and usage scenarios (Who / When)
- Strengths and differentiation points (Why)
- Category and industry context (Where)
- Comparison axes (price, function, usage, etc.)

Here, it does not simply retrieve the HTML; it breaks the page down into units of meaning (a semantic structure) that are easy for LLMs to understand.

Example: it extracts "topics that AI treats as concepts", such as "LLMO tools", "AI search measures", "Gemini", "visualization", and "competitor comparison".

(Note: this approach is in line with RAG / semantic search design.)
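To make the idea of going from raw HTML to structured fields concrete, here is a minimal sketch using only Python's standard library. It is not the tool's actual crawler, whose internals are not described here. The `PageSummary` and `SummaryParser` names and the sample HTML are hypothetical, and the sketch only collects the title, meta description, and headings rather than performing any real semantic analysis.

```python
from dataclasses import dataclass, field
from html.parser import HTMLParser


@dataclass
class PageSummary:
    """Hypothetical container for the fields pulled from one page."""
    title: str = ""
    description: str = ""
    headings: list = field(default_factory=list)


class SummaryParser(HTMLParser):
    """Collects the <title>, the meta description, and h1-h3 headings."""

    HEADING_TAGS = {"h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.summary = PageSummary()
        self._current = None  # tag whose text is currently being collected

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and attrs.get("name") == "description":
            self.summary.description = attrs.get("content") or ""
        elif tag == "title" or tag in self.HEADING_TAGS:
            self._current = tag

    def handle_data(self, data):
        text = data.strip()
        if not text or self._current is None:
            return  # ignore whitespace and text outside the tracked tags
        if self._current == "title":
            self.summary.title += text
        else:
            self.summary.headings.append(text)

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None


# Sample HTML stands in for a fetched page, so no network access is needed.
SAMPLE_HTML = """
<html><head>
<title>LLM Visualization Analysis Tool</title>
<meta name="description" content="Measure citations in AI search.">
</head><body>
<h1>LLMO tools</h1>
<h2>Competitor comparison</h2>
<p>Body text is ignored in this sketch.</p>
</body></html>
"""

parser = SummaryParser()
parser.feed(SAMPLE_HTML)
print(parser.summary)
```

A real pipeline would presumably go further, for example by passing the extracted text to a language model to identify the What / Who / Why / Where fields listed above.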

Step 2: Generate Prompts that "People Searching for That URL Would Actually Use"

Next, based on the crawled information, it automatically generates questions that users would naturally type into AI search.

The important point here is that it creates "questions (queries)" rather than keywords.

Examples of Generated Prompts

- "How can I get my company's service recommended in AI search?"
- "Which company provides end-to-end support for LLMO measures?"
- "How can I rank high when compared in Gemini?"
- "Please explain the difference between AI SEO and LLMO in an easy-to-understand way."

All of these follow the natural language query structures that people actually use every day in ChatGPT / Gemini / Perplexity.

The concept of prompt design can be found here → What is Query Fan-out
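The distinction between keywords and full questions can be illustrated with a simple template-based generator. This is a deliberately simplified stand-in: the tool presumably generates prompts from the crawled content in a more sophisticated way, and the `topics`, `templates`, and `generate_prompts` names below are hypothetical.

```python
from itertools import product

# Hypothetical topics, e.g. taken from the structure extracted in Step 1.
topics = ["LLMO tools", "AI search measures"]

# Templates written as full natural-language questions, not bare keywords.
templates = [
    "Which company is recommended for {topic}?",
    "How do I compare providers of {topic}?",
    "Please explain {topic} in an easy-to-understand way.",
]


def generate_prompts(topics, templates):
    """Return one question per (topic, template) pair, in a stable order."""
    return [template.format(topic=topic) for topic, template in product(topics, templates)]


prompts = generate_prompts(topics, templates)
for prompt in prompts:
    print(prompt)
```

Two topics and three templates yield six prompts, which then form the fixed prompt group that every later measurement is run against.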

Step 3: Execute AI Search with the Generated Prompts and Measure Results

Using the generated prompts, the tool:

- Runs a Gemini search
- Checks which brands appeared in the AI responses
- Checks which URLs/domains were referenced
- Records in what order and how many times each appeared

All of this is recorded and aggregated.

The results are visualized as:

- Brand Ranking
- Prompt Results
- Source Analysis
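The source side of this aggregation can be sketched in a similar way. The example below groups cited URLs by domain and computes a mention rate, an average rank, and a source count per domain. The sample records, the `source_metrics` function, and the metric definitions (mention rate as the share of prompts citing the domain, source count as the number of distinct URLs) are assumptions for illustration, not the tool's published method.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical record: for each prompt, the source URLs in the order they were cited.
cited_sources = {
    "prompt 1": ["https://example.com/compare", "https://blog.example.org/llmo"],
    "prompt 2": ["https://example.com/pricing"],
    "prompt 3": ["https://blog.example.org/glossary", "https://example.com/cases"],
    "prompt 4": [],
}


def source_metrics(records):
    """Return {domain: (mention_rate_pct, average_rank, source_count)}."""
    prompts_mentioning = defaultdict(set)  # domain -> prompts that cited it
    ranks = defaultdict(list)              # domain -> 1-based citation positions
    urls = defaultdict(set)                # domain -> distinct URLs cited
    for prompt, source_urls in records.items():
        for position, url in enumerate(source_urls, start=1):
            domain = urlparse(url).netloc
            prompts_mentioning[domain].add(prompt)
            ranks[domain].append(position)
            urls[domain].add(url)

    total_prompts = len(records)
    return {
        domain: (
            100 * len(prompts_mentioning[domain]) / total_prompts,
            sum(ranks[domain]) / len(ranks[domain]),
            len(urls[domain]),
        )
        for domain in ranks
    }


for domain, (rate, avg_rank, count) in sorted(source_metrics(cited_sources).items()):
    print(f"{domain}: mention rate {rate:.0f}%, average rank {avg_rank:.1f}, sources {count}")
```

Here `blog.example.org` is cited in 2 of the 4 prompts (positions 2 and 1) through 2 distinct URLs, so it gets a mention rate of 50%, an average rank of 1.5, and a source count of 2.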

Reasons Why This Tool is Effective for LLMO (Before / After)

Before: LLMO is Just a Feeling

- You do not know which questions you are losing on
- You do not understand why competitors are strong
- Decisions about what to fix tend to come down to "opinions"

After: LLMO Becomes an Improvement Task

- Winning patterns can be identified by questions × competitors × sources
- Measures shift from "increasing articles" to "increasing winning types"
- Improvements become repeatable (can be measured and fixed)

Next Steps

- Extract the top three domains from source analysis
- Classify the strong "types" of the top domains (comparison / terminology / case studies / pricing / procedures)
- Reproduce that type on your company site in a hub structure
- Run this tool again to see the changes in display ratio and average rank
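The first two steps above can be sketched roughly as follows, assuming you have copied the cited URLs out of the Sources tab by hand. The URLs, the `TYPE_KEYWORDS` mapping, and the `classify` helper are all hypothetical, and matching keywords in the URL path is only a crude heuristic; in practice you would also read the pages themselves.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical cited URLs collected from a source analysis run.
cited_urls = [
    "https://a.example/compare/llmo-tools",
    "https://a.example/pricing",
    "https://b.example/glossary/llmo",
    "https://a.example/case-studies/retail",
    "https://c.example/how-to/setup",
    "https://b.example/compare/ai-seo",
    "https://c.example/glossary/aio",
    "https://d.example/about",
]

# URL keyword -> content type. These keywords are illustrative guesses only.
TYPE_KEYWORDS = {
    "compare": "comparison",
    "glossary": "terminology",
    "case": "case studies",
    "pricing": "pricing",
    "how-to": "procedures",
}


def classify(url):
    """Guess the content type from the URL path; 'other' if nothing matches."""
    path = urlparse(url).path.lower()
    for keyword, content_type in TYPE_KEYWORDS.items():
        if keyword in path:
            return content_type
    return "other"


# Step 1: the three most frequently cited domains.
domain_counts = Counter(urlparse(url).netloc for url in cited_urls)
top_domains = [domain for domain, _ in domain_counts.most_common(3)]

# Step 2: which content types those domains are cited for.
types_by_domain = {
    domain: Counter(classify(u) for u in cited_urls if urlparse(u).netloc == domain)
    for domain in top_domains
}

for domain in top_domains:
    print(domain, dict(types_by_domain[domain]))
```

The resulting per-domain type counts suggest which content types to reproduce in your own hub structure before re-running the measurement.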

If you want to refine your structure and information design → Umoren.ai Platform

If you want to go further into "winning question design, citation acquisition, and structural improvement"

The free tool is well suited to first quantifying the current situation and getting a sense of direction.

However, deciding "which prompt groups to expand", "which pages to turn into hubs", and "which information to add as primary information" requires design and implementation work.

Umoren.ai from Queue Inc. supports the more detailed LLMO work, from design through implementation and the improvement cycle.

- Expansion of visualization (dashboarding and fixed-point observation) → LLMO Platform
- Implementation of measures (structural improvement, content design, citation acquisition) → LLMO Optimization Support
- Consultation and estimates → Contact Us
